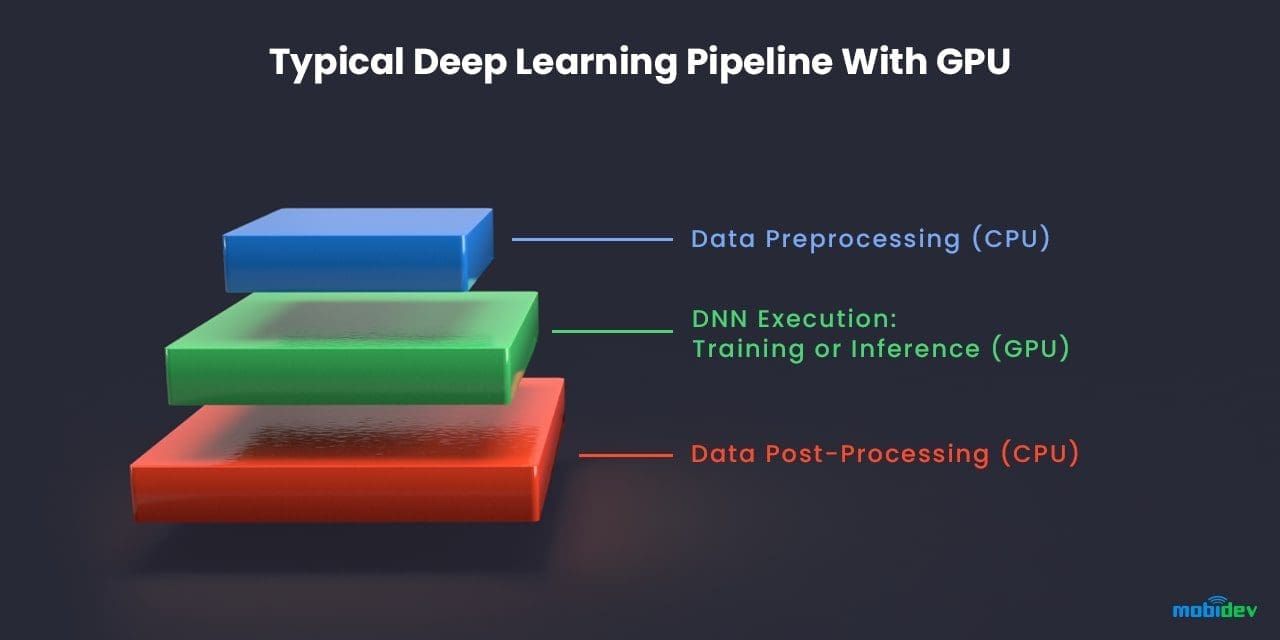

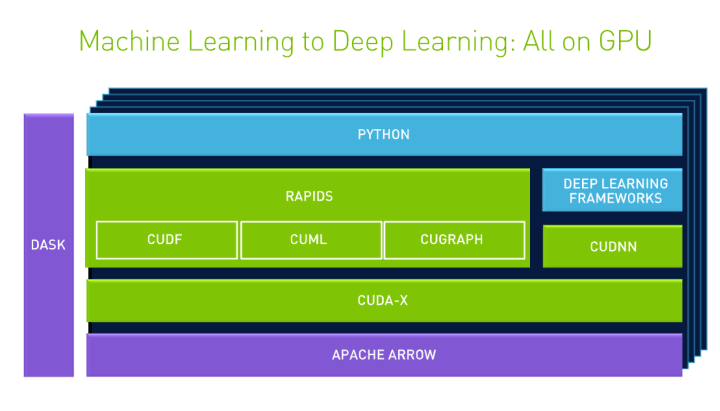

How to use NVIDIA GPUs for Machine Learning with the new Data Science PC from Maingear | by Déborah Mesquita | Towards Data Science

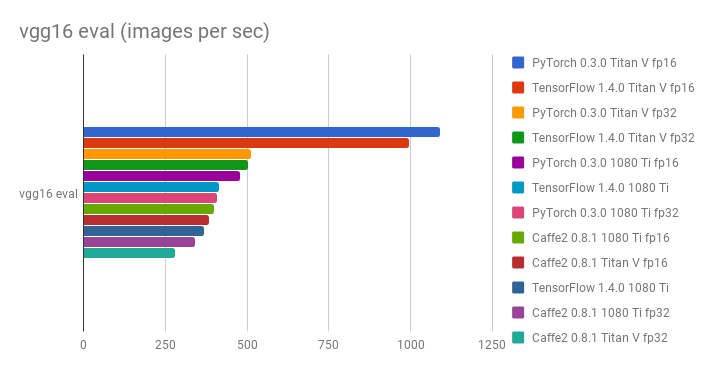

GitHub - u39kun/deep-learning-benchmark: Deep Learning Benchmark for comparing the performance of DL frameworks, GPUs, and single vs half precision

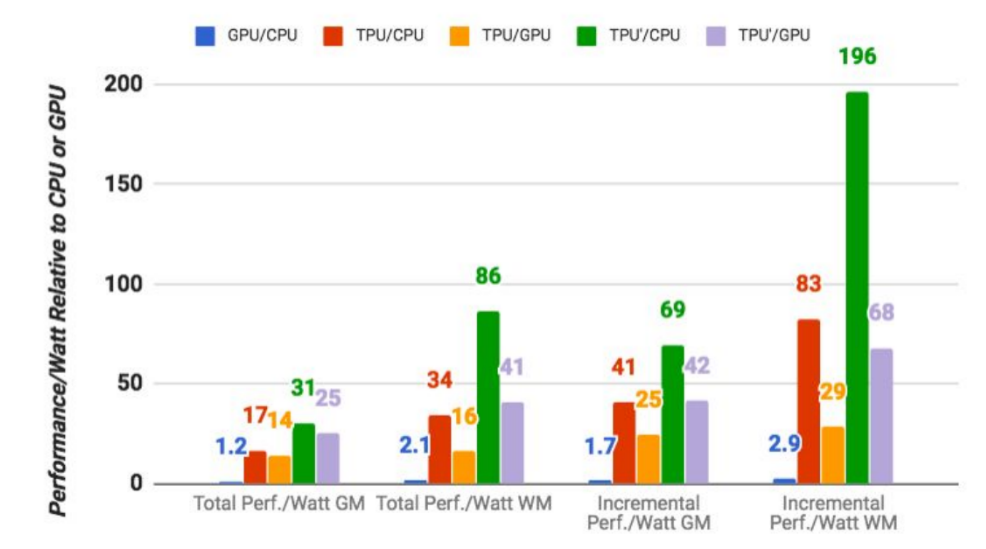

Google says its custom machine learning chips are often 15-30x faster than GPUs and CPUs | TechCrunch

![\[D\] Which GPU(s) to get for Deep Learning (Updated for RTX 3000 Series) : r/MachineLearning](https://external-preview.redd.it/5u8jDdRCaP-A20wEDShn0DFiQIgr2DG_TGcnakMs6i4.jpg?width=640&crop=smart&auto=webp&s=2414712edeae55687697e6a27535d58f952dc5ba)